Voice, the New Modality for Self-Service: How Popular Is It?

Where It Works—and Where It Doesn’t

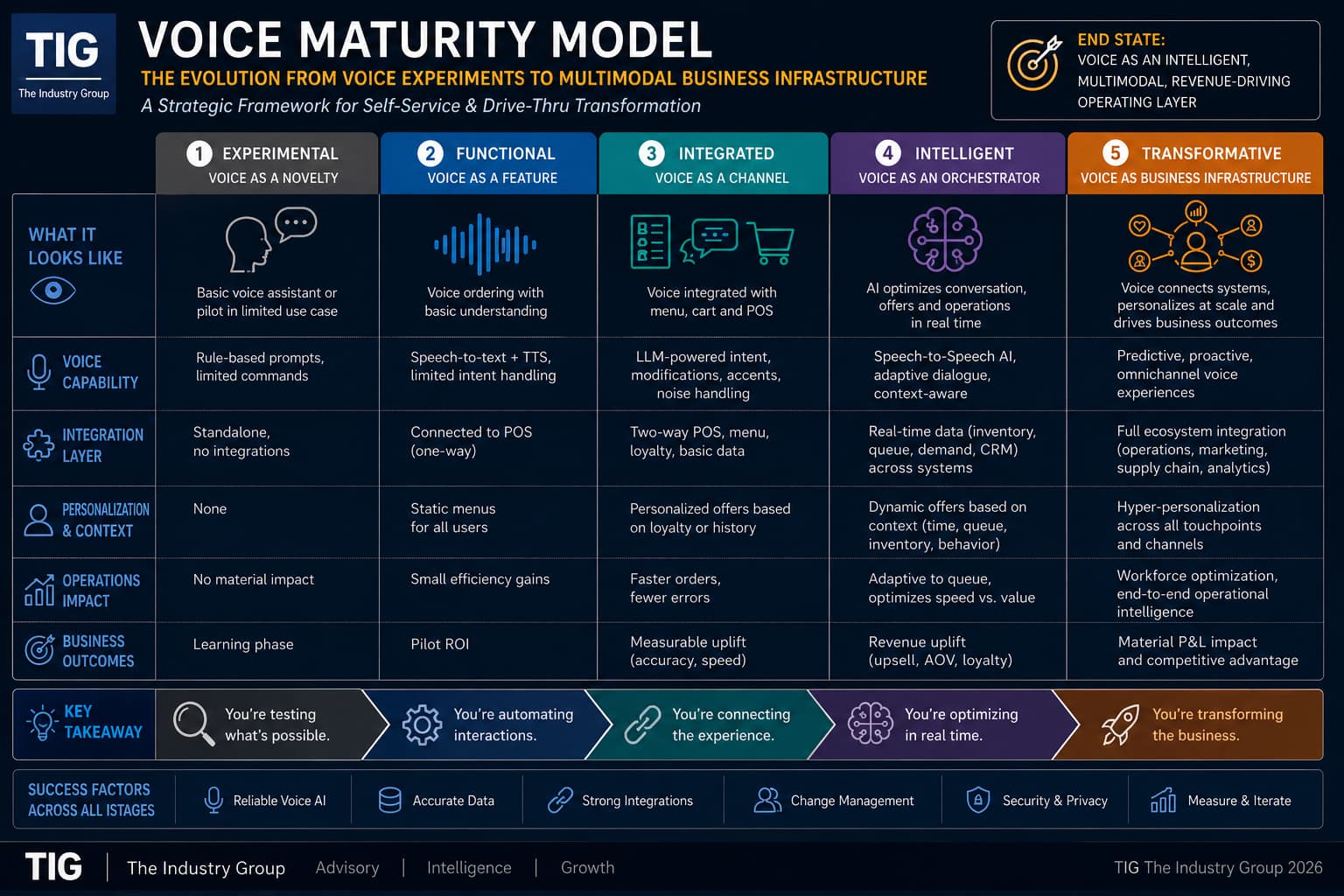

Voice is rapidly emerging as a new interface layer in self-service, but adoption is not happening evenly across use cases. The Industry Group has posted an overview of voice in self-service that includes VAIMS scoring and a worksheet assessment for voice readiness.

The assumption that voice will replace touchscreens across kiosks is incorrect. In reality, voice is gaining traction in environments that are high-volume, time-sensitive, and constrained by labor.

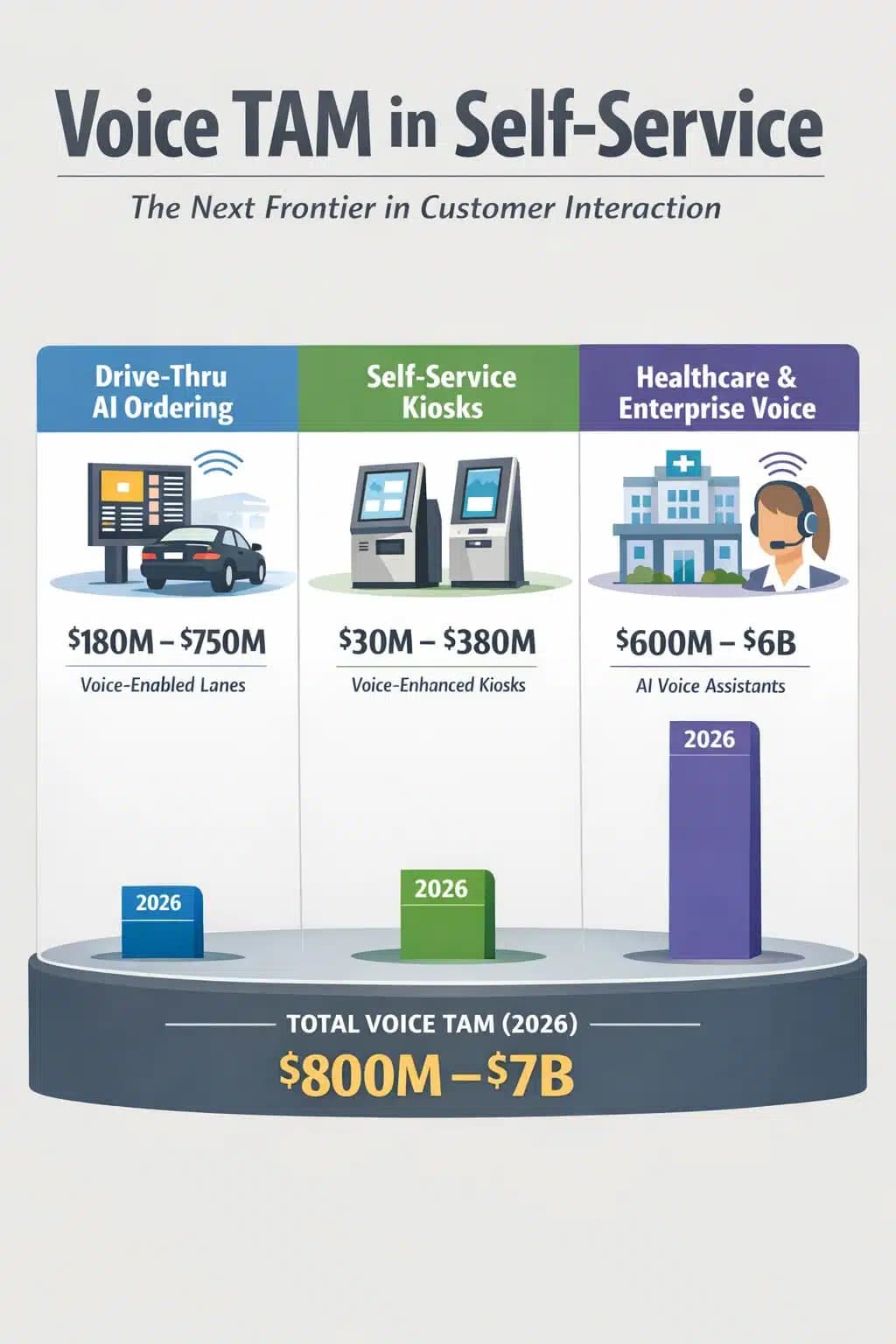

The clearest example is the quick-service restaurant drive-thru. Here, voice aligns with operational needs—fast ordering, minimal friction, and limited decision complexity. It is no surprise this segment is leading adoption.

Beyond QSR, voice is gaining ground in service triage applications such as healthcare intake and enterprise support. In these environments, the value is less about convenience and more about workflow efficiency—reducing human intervention while maintaining throughput.

The AVIXA Angle: Voice Is an AV + Data Problem

For the pro AV community, the shift to voice introduces a different kind of design challenge.

Voice is not just an interface—it is a systems integration problem spanning audio, visual, and data layers.

1. Audio Capture Is Mission-Critical

- Far-field microphones must perform in:

  - outdoor environments (drive-thru noise, weather)

  - high ambient noise spaces (retail, healthcare)

- Beamforming, echo cancellation, and noise suppression become core infrastructure—not optional features

👉 Poor audio = failed experience. Every time.
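For readers who want a feel for what beamforming does, here is a minimal delay-and-sum sketch. It assumes a uniform linear microphone array, whole-sample delays, and a known talker direction; the function names `steering_delays` and `delay_and_sum` are illustrative, not from any vendor SDK. Production arrays typically add fractional delays, adaptive weighting, and echo cancellation on top of this idea.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at room temperature


def steering_delays(num_mics, spacing_m, angle_deg, sample_rate_hz):
    """Arrival delay (in whole samples) at each mic of a uniform linear array.

    angle_deg is measured from broadside: 0 means the talker is straight ahead.
    """
    delay_per_mic_s = spacing_m * math.sin(math.radians(angle_deg)) / SPEED_OF_SOUND_M_S
    raw = [round(i * delay_per_mic_s * sample_rate_hz) for i in range(num_mics)]
    base = min(raw)  # shift so the earliest mic has delay 0 (covers negative angles)
    return [d - base for d in raw]


def delay_and_sum(channels, delays):
    """Advance each channel by its delay, then average the aligned samples.

    Sound from the steered direction adds coherently; noise arriving from
    other directions is misaligned and partially averages out.
    """
    usable = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[n + d] for ch, d in zip(channels, delays)) / len(channels)
        for n in range(usable)
    ]


# Toy example: the same signal reaches three mics 0, 2, and 4 samples late.
speech = [0.0, 1.0, 0.0, -1.0, 0.0, 2.0, 0.0, -2.0]
delays = [0, 2, 4]
channels = [[0.0] * d + speech for d in delays]
aligned = delay_and_sum(channels, delays)
```

In the toy example the aligned output reproduces the original signal, which is the point of steering: the array "listens" in one direction.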

2. Visual Systems Become More Important, Not Less

Voice increases reliance on visual confirmation layers:

- Digital menu boards

- Real-time order displays

- Dynamic signage for upsell and personalization

👉 This reinforces a key point for AV integrators:

Voice drives more demand for digital signage, not less.

3. Real-Time Content Synchronization

Voice systems must stay in sync with:

- pricing

- promotions

- inventory

- customer context

That requires:

- CMS platforms (like digital signage networks)

- API-driven data flows

- low-latency updates across screens

👉 This is where AV meets enterprise IT.
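A rough sketch of what "staying in sync" can mean in practice: one source of truth pushes versioned snapshots to every endpoint, so the voice agent never offers an item the menu board has already marked as sold out. Everything here (`ContentHub`, `Endpoint`, `ItemState`, the SKU name) is hypothetical and in-memory; a real deployment would sit behind a CMS and POS APIs rather than Python callbacks.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass(frozen=True)
class ItemState:
    price_cents: int
    in_stock: bool


@dataclass
class ContentHub:
    """Hypothetical single source of truth that fans updates out to endpoints."""
    version: int = 0
    items: Dict[str, ItemState] = field(default_factory=dict)
    subscribers: List[Callable[[int, Dict[str, ItemState]], None]] = field(default_factory=list)

    def subscribe(self, callback):
        self.subscribers.append(callback)
        callback(self.version, dict(self.items))  # send the current snapshot immediately

    def publish(self, sku, state):
        self.items[sku] = state
        self.version += 1
        snapshot = dict(self.items)
        for callback in self.subscribers:
            callback(self.version, snapshot)


class Endpoint:
    """Stands in for a voice agent or a menu board."""

    def __init__(self, name):
        self.name = name
        self.version = -1
        self.items = {}

    def on_update(self, version, items):
        if version > self.version:  # ignore stale or duplicate deliveries
            self.version, self.items = version, dict(items)

    def can_offer(self, sku):
        state = self.items.get(sku)
        return state is not None and state.in_stock


hub = ContentHub()
voice = Endpoint("voice_agent")
board = Endpoint("menu_board")
hub.subscribe(voice.on_update)
hub.subscribe(board.on_update)
hub.publish("combo-1", ItemState(price_cents=899, in_stock=True))
hub.publish("combo-1", ItemState(price_cents=899, in_stock=False))  # item sells out
```

After the second publish, both endpoints hold the same version and neither will offer the sold-out item. The version check is the design choice worth noting: it lets endpoints discard updates that arrive late or twice.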

4. Multimodal Experience Design

The winning deployments are not “voice-first”—they are multimodal by design:

- Voice for input

- Visuals for confirmation and persuasion

- Data systems for orchestration

For AV professionals, this shifts the role from display deployment to experience orchestration.
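To make the voice, visual, and data split concrete, here is a small decision sketch for a single spoken order item. The thresholds, the `Action` names, and the hand-off rule are assumptions chosen for illustration, not values from any deployed system: voice supplies the input, the data layer checks availability, and the screen handles confirmation when the recognizer is unsure.

```python
from enum import Enum


class Action(Enum):
    ADD_TO_ORDER = "add_to_order"            # confident: update the order display
    CONFIRM_ON_SCREEN = "confirm_on_screen"  # unsure: show the item and ask yes/no
    REPROMPT = "reprompt"                    # not understood or not available
    HAND_OFF = "hand_off"                    # stop retrying: staff or touchscreen


def next_action(item, confidence, available_items, failed_attempts,
                accept_at=0.90, confirm_at=0.60, max_failures=2):
    """Pick the next step for one recognized item (all thresholds are assumed)."""
    if failed_attempts >= max_failures:
        return Action.HAND_OFF
    if item not in available_items:          # data layer: menu and inventory check
        return Action.REPROMPT
    if confidence >= accept_at:
        return Action.ADD_TO_ORDER
    if confidence >= confirm_at:
        return Action.CONFIRM_ON_SCREEN      # visual layer does the confirming
    return Action.REPROMPT


menu = {"cheeseburger", "fries"}
step = next_action("fries", 0.70, menu, failed_attempts=0)
```

Here a mid-confidence "fries" is routed to on-screen confirmation rather than added blindly, and repeated failures fall back to staff or touch instead of looping.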

5. Edge Compute and AI Processing

Voice systems increasingly rely on:

- edge AI (for latency and reliability)

- local processing for speech and vision

- hybrid cloud architectures

👉 This brings AV infrastructure closer to compute infrastructure.
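One possible shape for a hybrid architecture is "edge first, cloud when needed." The sketch below assumes two hypothetical recognizer callables, `edge_model` and `cloud_model`, and an assumed confidence cutoff; it is not modeled on any specific speech platform. The local result is used when it is confident enough, the cloud is consulted otherwise, and a network failure never blocks the lane.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Transcript:
    text: str
    confidence: float  # 0.0 to 1.0
    source: str        # "edge" or "cloud"


def recognize_hybrid(audio: bytes,
                     edge_model: Callable[[bytes], Transcript],
                     cloud_model: Callable[[bytes], Optional[Transcript]],
                     min_edge_confidence: float = 0.85) -> Transcript:
    """Prefer the on-site result; ask the cloud only when the edge is unsure."""
    local = edge_model(audio)
    if local.confidence >= min_edge_confidence:
        return local  # fast path: no network round trip
    try:
        remote = cloud_model(audio)
    except (TimeoutError, ConnectionError):
        remote = None  # the network is down or slow: keep going with the edge result
    if remote is not None and remote.confidence > local.confidence:
        return remote
    return local


# Stub recognizers standing in for real models.
def edge_unsure(audio):
    return Transcript("large fries", 0.55, "edge")


def cloud_offline(audio):
    raise ConnectionError("uplink unavailable")


result = recognize_hybrid(b"", edge_unsure, cloud_offline)
```

With the cloud offline, the call still returns the edge transcript, which is the reliability argument for local processing in a drive-thru lane.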

Where Voice Still Struggles

Inside traditional kiosks—retail browsing, wayfinding, and complex transactions—voice remains situational.

Customers need:

- comparison

- exploration

- confidence

These are still better served visually.

Bottom Line

Voice is not replacing touch or screens.

It is expanding the interface stack.

The real opportunity for the AV industry is not microphones—it is:

- integrating voice into digital signage ecosystems

- synchronizing content and AI decisioning

- designing environments where audio, visual, and data operate as one

Final Word

Voice is not the future of self-service on its own.

But as part of a multimodal system, it is becoming essential.

The winners won’t be those who deploy voice.

They will be those who integrate it into the operating model.

Addendum

Voice in Self-Service original article — Concise Outline

1. Core

- Voice is emerging as a new modality layer

- Adoption is real—but highly uneven

- Not a kiosk story → an operations story

2. Where Voice Is Scaling (Ranked)

#1 Drive-Thru (Dominant)

- Clear market leader

- High volume + constrained workflows

- Voice becoming default interface

#2 Service Triage (Healthcare / Enterprise)

- Intake, routing, support automation

- Voice = front door filter

#3 In-Store / Kiosk (Selective)

- Limited adoption

- Works only in narrow use cases

3. Why Drive-Thru Wins

- Fixed menu → predictable vocabulary

- Linear workflow → low complexity

- Labor pressure → immediate ROI

- Customers already trained for voice

👉 Only segment approaching true scale deployment

4. Why Kiosks Lag

- Browsing vs execution problem

- Need for:

  - visual confirmation

  - comparison

  - user confidence

👉 Voice alone cannot replace screen-based UX

5. Key Inflection Point

- Touchscreens have peaked

- Voice + gesture = next wave of interaction

- But not replacement → additive modalities

6. Operational Reality (Critical Insight)

Voice success depends on:

- backend integration (POS, pricing, inventory)

- workflow orchestration

- error handling + fallback

👉 Not a UI problem → system design problem

7. Emerging Model

- Shift toward multimodal stack

  - voice + visual + data + AI

- Voice becomes:

  - input layer

  - routing layer

  - optimization layer

👉 Industry moving beyond single-interface design

8. Bottom Line

- Biggest growth is before the kiosk, not at it

- Drive-thru = primary voice interface

- Enterprise/healthcare = next wave

- Kiosks = multimodal only

Voice will not replace touch.

It will sit alongside it as part of a broader system.
